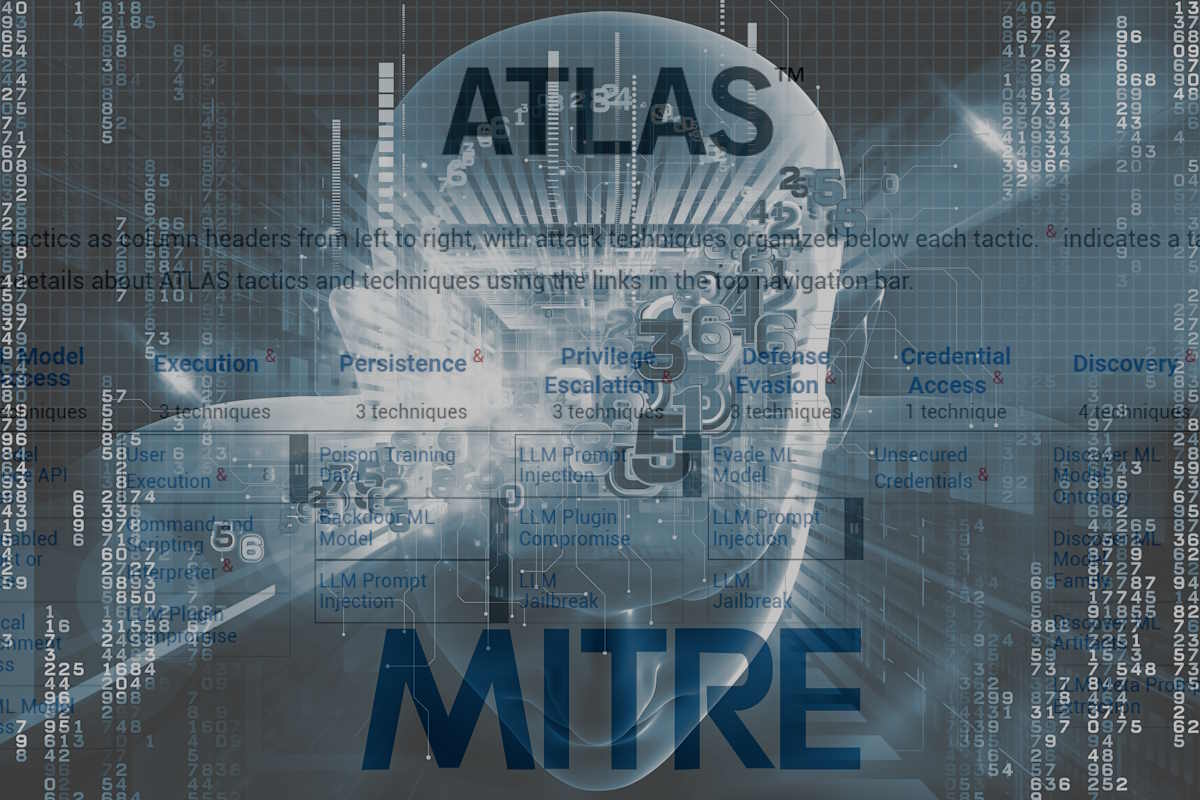

MITRE and Microsoft add data-driven generative AI to MITRE ATLAS community knowledge base

On Monday, non-profit organization MITRE and software giant Microsoft added a data-driven generative AI focus to MITRE ATLAS, a community knowledge base that security professionals, AI developers, and AI operators can use as they protect artificial intelligence (AI)-enabled systems. The framework update and associated new case studies directly address unique vulnerabilities of systems that incorporate generative AI and large language models (LLMs) like ChatGPT and Bard.

The updates to MITRE ATLAS, which stands for Adversarial Threat Landscape for Artificial-Intelligence Systems, are intended to describe the increasing number and type of attack pathways in LLM-enabled systems that consumers and organizations are rapidly adopting. Such characterizations of realistic AI-enabled system attack pathways can be used to strengthen defenses against malicious attacks across a variety of consequential applications of AI, including in healthcare, finance, and transportation.

“Many are concerned about security of AI-enabled systems beyond cybersecurity alone, including large language models,” Ozgur Eris, managing director of MITRE’s AI and Autonomy Innovation Center, said in a media statement. “Our collaborative efforts with Microsoft and others are critical to advancing ATLAS as a resource for the nation.”

“Microsoft and MITRE worked with the ATLAS community to launch the first version of the ATLAS framework for tabulating attacks on AI systems in 2020, and ever since, it has become the de facto Rosetta Stone for security professionals to make sense of this ever-shifting AI security space,” according to Ram Shankar Siva Kumar, Microsoft data cowboy. “Today’s latest ATLAS evolution to include more LLM attacks and case studies underscores the framework’s incredible relevance and utility.”

MITRE ATLAS is a globally accessible, living knowledge base of adversary tactics and techniques based on real-world attack observations and realistic demonstrations from AI red teams and security groups. The ATLAS project involves global collaboration with over 100 government, academic, and industry organizations. Under that collaboration umbrella, MITRE and Microsoft have worked together to expand ATLAS and develop tools based on the framework that support industry, government, and academia as they work to increase the security of AI-enabled systems.

These new ATLAS tactics and techniques are grounded in case studies of incidents that occurred in 2023 and were discovered by users or security researchers, including:

- ChatGPT Plugin Privacy Leak: Uncovered an indirect prompt injection vulnerability within ChatGPT, where an attacker can feed malicious websites through ChatGPT plugins to take control of a chat session and exfiltrate the history of the conversation.

- PoisonGPT: Demonstrated how to successfully modify a pre-trained LLM to return false facts. As part of this demonstration, the poisoned model was uploaded to the largest publicly accessible model hub to illustrate the consequences posed to the LLM’s supply chain. As a result, users who downloaded the poisoned model were at risk of receiving and spreading misinformation.

- MathGPT Code Execution: Exposed a vulnerability to prompt injection attacks within MathGPT, which uses GPT-3 to answer math questions, allowing an actor to gain access to the host system’s environment variables and the app’s GPT-3 API key. This could enable a malicious actor to charge MathGPT’s GPT account for its use, causing financial harm, or to launch a denial-of-service attack that could hurt MathGPT’s performance and reputation. The vulnerabilities were mitigated after disclosure.
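The MathGPT case turns on model-generated code running in a process that can read the host's environment variables. The article does not describe how the issue was mitigated, so the following is only a minimal sketch of one common precaution: running untrusted code in a child process that inherits an allowlisted environment rather than the parent's. The function name `run_untrusted` and the `FAKE_API_KEY` variable are hypothetical, and this is not a complete sandbox, since the child can still reach the file system and network.

```python
import os
import subprocess
import sys

# Only these variables are passed through to the child process.
# PATH and SYSTEMROOT are kept so the interpreter can start on
# POSIX and Windows; nothing secret should ever be listed here.
ALLOWED_ENV = ("PATH", "SYSTEMROOT")


def run_untrusted(code: str, timeout: float = 5.0) -> str:
    """Run a Python snippet in a child process with a scrubbed environment.

    Hypothetical helper for illustration only: it limits what the snippet
    can read from the environment, but it does not restrict file or
    network access.
    """
    child_env = {k: os.environ[k] for k in ALLOWED_ENV if k in os.environ}
    result = subprocess.run(
        [sys.executable, "-I", "-c", code],  # -I: isolated mode
        env=child_env,                       # parent's secrets are not inherited
        capture_output=True,
        text=True,
        timeout=timeout,                     # bound runaway snippets
    )
    return result.stdout


if __name__ == "__main__":
    # Simulate a secret living in the parent process.
    os.environ["FAKE_API_KEY"] = "secret-value"
    snippet = "import os; print(os.environ.get('FAKE_API_KEY'))"
    # The child should not be able to see the parent's secret.
    print(run_untrusted(snippet))
```

Keeping credentials out of the child's environment limits what a prompt-injected snippet can read, but a production deployment would typically add stronger isolation, such as containers or restricted accounts, along with resource limits.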

Additionally, the broader ATLAS community of industry, government, academia, and other security researchers provided feedback to shape and inform these new tactics and techniques.

The ATLAS community collaboration will now focus on incident and vulnerability sharing to continue to grow the community’s anonymized dataset of real-world attacks and vulnerabilities observed in the wild. The incident and vulnerability sharing work has also expanded to incorporate incidents in the broader AI assurance space, including AI equitability, interpretability, reliability, robustness, safety, and privacy enhancement.

The ATLAS community is actively sharing information on how to address supply chain issues, such as AI bill of materials (BOM) and model signing, as well as provenance best practices. These valuable insights can be accessed through the ATLAS GitHub page and Slack channel, which are open to the public. By utilizing these platforms, community members can exchange knowledge and discuss what strategies are currently effective within their organizations. This collaborative approach aims to enhance the alignment of AI supply chain risk mitigation practices and techniques.
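As a rough illustration of the provenance idea, a consumer of a model file can compare its SHA-256 digest with a value pinned in a trusted record, such as an entry in an AI BOM. The sketch below is an assumption-laden example, not an ATLAS tool: the function names are hypothetical, and a plain digest check is weaker than model signing, which relies on asymmetric signatures to establish who published the artifact.

```python
import hashlib
import hmac
from pathlib import Path


def sha256_of_file(path: Path, chunk_size: int = 1 << 20) -> str:
    """Return the hex SHA-256 digest of a file, read in chunks."""
    digest = hashlib.sha256()
    with path.open("rb") as f:
        # Read fixed-size chunks so large model files fit in memory.
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_artifact(path: Path, expected_sha256: str) -> bool:
    """Check a downloaded artifact against a pinned digest.

    `expected_sha256` is assumed to come from a trusted source
    (for example, a BOM entry obtained out of band).
    """
    actual = sha256_of_file(path)
    # compare_digest performs a constant-time comparison.
    return hmac.compare_digest(actual, expected_sha256.lower())
```

A check like this only detects that a file differs from the pinned version; it helps against a swapped or tampered download, but not against a model that was already poisoned before its digest was recorded.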

Last week, U.S. President Joe Biden issued an Executive Order to prioritize America’s role in harnessing the potential of artificial intelligence (AI) while addressing associated risks. The Executive Order establishes new standards for AI safety and security, protects Americans’ privacy, and advances equity and civil rights. It also stands up for consumers and workers and promotes innovation and competition while advancing American leadership around the world. With the Executive Order, the President requires that developers of the most powerful AI systems share their safety test results and other critical information with the U.S. government.

Last week, MITRE released ATT&CK v14, which includes enhanced detection guidance for many techniques, expanded scope on Enterprise and Mobile, assets in ICS (industrial control systems), and structured detections for Mobile. In v14, MITRE expanded the number of techniques that have the new “easy button” and added a new source of analytics. One focus of this release was Lateral Movement, which now features over 75 BZAR-based analytics. BZAR (Bro/Zeek ATT&CK-based Analytics and Reporting) is a subset of CAR analytics that enables defenders to detect and analyze network traffic for signs of ATT&CK-based adversary behavior.